One of the most remarkable things about life on earth, in all its forms, is how cells often only tens of microns in diameter have evolved to carry out the variety of tasks that they do. In multicellular organisms, the situation is even more complicated, as different cell types need to work together in an orchestrated manner in functional units such as tissues and organs to maintain the health of the organism. Ultimately, therefore, it is the function of individual cells in our body that determines our health, and our susceptibility to disease and infection. The discipline of cell biology serves to understand how cells work, and importantly what goes wrong in cells to cause disease. It is a discipline, with associated technologies, positioned at the centre of all fundamental biomedical research.

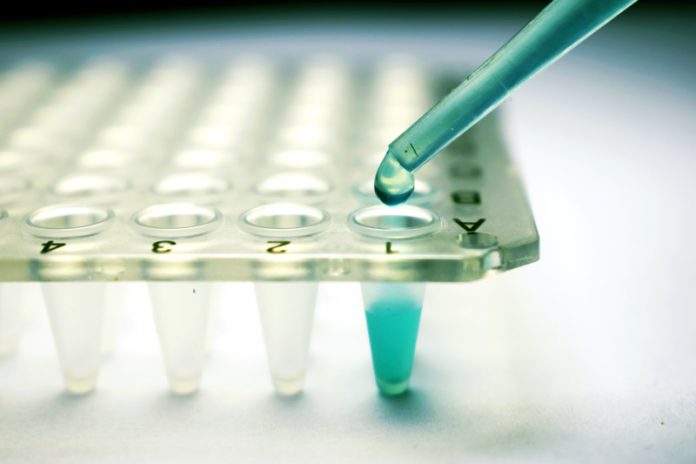

Since the mid-seventeenth century, microscopy has been the primary tool for scientists to reveal the structure and organisation of cells, both in isolation and in their ‘social’ context. In the late twentieth and early twenty-first centuries, however, the widespread application and integration of fluorescence technologies with microscopy have provided new opportunities to reveal the innermost workings of cells. Fluorescence microscopy potentially allows researchers to view not only the cellular organelles but also the billions of molecules – in particular, proteins – that work together to provide the cell with its functionality. This raises a central question for the post-genome sequencing age: how can we assign a discrete function to each of the 22,000 human genes and the proteins that they encode?

Furthermore, how can we identify those proteins that can cause a particular disease and those proteins that can have protective properties? Carrying out such experiments in intact and preferably living cells has obvious benefits, but clearly, the scale of such experiments is challenging. Simply visualising each protein in turn (and molecular techniques in principle make this possible) requires 22,000 individual microscopy images. Even before considering replicates or any further experimental complexity, we would need to image almost every well of 230 96-well plates in a consistent manner. Even if this is achievable, the next problem is how to interpret the images in such a way that we can objectively compare them. The issues, then, are experimental scale and complexity of information.
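The plate count quoted above can be checked with a short calculation. This is a minimal sketch that assumes one gene per well and one image per well, with no control wells or replicates; the function name `plates_needed` is purely illustrative.

```python
import math


def plates_needed(n_genes, wells_per_plate=96):
    """Return (plates required, wells left unused on the final plate).

    Assumption: one gene per well, no control wells, no replicates.
    """
    plates = math.ceil(n_genes / wells_per_plate)
    unused = plates * wells_per_plate - n_genes
    return plates, unused


plates, unused = plates_needed(22_000)
print(plates, unused)  # prints: 230 80
```

Under these assumptions only 80 of the 22,080 available wells stay empty, which is why almost every well is needed. Any control wells or replicates would increase the plate count further.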

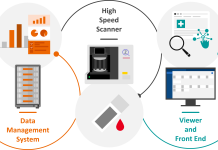

In the last ten years, this experimental approach has become a reality, and these barriers are being overcome, encompassed in a technology termed ‘high content screening and analysis’ – HCS / HCA. This is a fusion technology, combining lab automation, particularly in terms of the microscopy, with sophisticated software routines capable of analysing images of millions of individual cells (‘high throughput’) and extracting user-defined quantitative information (‘content’) for each cell. Since its development, labs around the world have embraced its power to address both fundamental cell biology questions and applications relevant to human health and disease. HCS can be, and has been, used to rapidly screen massive libraries of chemical compounds to identify leads with desired cellular phenotypes, to identify host factors associated with virus infection, and to reveal new triggers for cancer cell development. For cell biologists, it has proved to be a particularly powerful technique when combined with RNA interference (RNAi), a molecular technique that allows researchers to inactivate genes and the proteins that they encode in a systematic manner. Carrying out RNAi experiments in an HCS format effectively allows us to dissect the function of each gene/protein in turn with respect to a particular biological question, with the output being images of cells revealing the phenotype, together with their quantitative analysis.
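The idea of extracting quantitative ‘content’ for each cell can be illustrated with a toy example. The sketch below is not the software used in any real HCS platform: it assumes that cells can be separated from background by a simple intensity threshold, treats each connected bright region as one cell, and reports only area and mean intensity. The function names `label_cells` and `measure_cells`, and the tiny synthetic image, are hypothetical.

```python
from collections import deque


def label_cells(image, threshold):
    """Label 4-connected regions of pixels brighter than `threshold`."""
    rows, cols = len(image), len(image[0])
    labels = [[0] * cols for _ in range(rows)]
    n_cells = 0
    for r in range(rows):
        for c in range(cols):
            if image[r][c] <= threshold or labels[r][c]:
                continue  # background pixel, or already assigned to a cell
            n_cells += 1
            labels[r][c] = n_cells
            queue = deque([(r, c)])
            while queue:  # breadth-first flood fill of this region
                y, x = queue.popleft()
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    if (0 <= ny < rows and 0 <= nx < cols
                            and image[ny][nx] > threshold
                            and not labels[ny][nx]):
                        labels[ny][nx] = n_cells
                        queue.append((ny, nx))
    return labels, n_cells


def measure_cells(image, labels, n_cells):
    """Return area (in pixels) and mean intensity for each labelled cell."""
    stats = {i: {"area": 0, "total": 0} for i in range(1, n_cells + 1)}
    for image_row, label_row in zip(image, labels):
        for value, label in zip(image_row, label_row):
            if label:
                stats[label]["area"] += 1
                stats[label]["total"] += value
    return {i: {"area": s["area"], "mean_intensity": s["total"] / s["area"]}
            for i, s in stats.items()}


# Synthetic 4 x 6 'image' containing one bright and one dim cell.
toy_image = [
    [0, 0, 0, 0, 0, 0],
    [0, 9, 9, 0, 0, 0],
    [0, 9, 9, 0, 4, 4],
    [0, 0, 0, 0, 4, 4],
]
labels, n_cells = label_cells(toy_image, threshold=2)
features = measure_cells(toy_image, labels, n_cells)
print(n_cells, features)
```

Here the two cells have the same area but different mean intensities, the kind of per-cell difference that a screen would record as a phenotype. Real analysis pipelines use far more robust segmentation and many more features per cell.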

In the Cell Screening Lab at UCD (www.ucd.ie/hcs) we have been developing and applying HCS strategies for a number of years to address questions related to how cells transport material (cargo) between their various internal organelles. Understanding how these membrane transport processes work is of vital importance, as cargo inside cells can only support cell function if it is located in the correct place. For example, signalling receptors at the cell surface are actually synthesised and assembled in internal membranes of the endoplasmic reticulum, requiring transport through intermediate organelles for further processing prior to delivery to the cell surface. Many human diseases are associated with mistargeting of such receptors – cystic fibrosis is a well-known example. Our ongoing mission is to use RNAi at a whole genome scale to systematically dissect how such transport pathways are regulated, and ultimately to use this information to gain insight into how they can be manipulated.

Improving drug delivery efficacy into cells is one good example of how this approach can be utilised. Ultimately, therefore, we believe that our HCS approaches provide critical information about cell organisation that can be exploited by many branches of biomedical research. There are of course both technological and political challenges to overcome if HCS is to continue providing valuable data to the scientific community. From a technological perspective there is a move towards the use of more complex 3-dimensional multi-cell type models. Although these may better represent the in vivo situation, they are more difficult to image and precisely quantify, requiring confocal HCS technology. Politically, HCS is a relatively expensive technique, both in terms of its hardware and the reagents needed to carry out large-scale screens. In the current challenging environment of research grant availability, funding bodies often prioritise more advanced or applied projects that might return a gain in the short term. Ignoring HCS projects would be foolish, as they show real promise to deliver advanced cell biology knowledge that will inform and drive the direction of future biomedical research.

Prof. Jeremy C. Simpson

Cell Screening Laboratory

University College Dublin (UCD)

jeremy.simpson@ucd.ie