Here, Professor Kensuke Harada discusses the implementation of robotic manipulation research in the real world.

Introduction

Designing robots to perform human tasks is one of the biggest challenges in robotics. Robotics researchers have worked on this problem for many years; however, robots can so far perform only a small number of human tasks. This may seem strange, as humans perform most manual tasks in everyday life without any difficulty. Humans are aware of the difficulty of tasks that require delicate force adjustments or fine position adjustments, but they tend to think that tasks that are easy for a human are also easy for a robot.

In the homunculus diagram compiled by Penfield, which shows the correspondence between the motor and somatosensory cortices and body parts, the areas of the brain related to fingers and palms occupy one-third of the motor cortex and one-quarter of the sensory cortex. Hence, human hands are often called the second brain. This suggests that the functions of hands and fingers, which we perform without thinking, rely on an enormous amount of tacit knowledge stored in the brain. In other words, the key to robotic manipulation research is determining how to let robots use this kind of tacit knowledge. Studying the functions of robotic hands and fingers is a profound problem directly related to the understanding of human intelligence.

This article describes robotic manipulation research conducted mainly in the author’s laboratory, together with its applications. As mentioned above, research on robotic manipulation is academically interesting, but at the same time, it is increasingly relevant to many fields of our daily life and industry.

Academic research

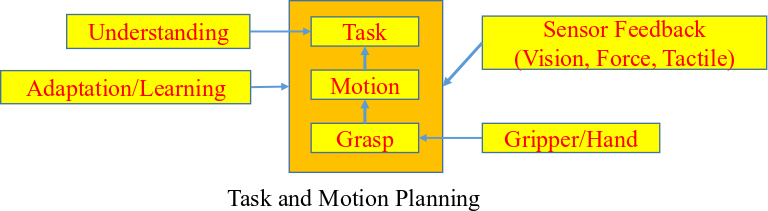

Fig. 1 shows the relationship between the research items necessary for the automatic generation of robotic manipulation motions. One intuitive approach is to apply robotic motion planning. In this figure, a three-layered structure for robotic motion planning is shown in the center. The top layer plans the task procedure; for example, when the robot is instructed to perform a target task, this layer plans the sequence of motions needed to realize it. Next, the middle layer plans the motion of the robot to accomplish the target task. Then, the lowest layer plans the posture of the hand for grasping the target object so that the required task can be completed. This three-layered motion planning is called integrated task and motion planning (TAMP). To date, various studies have been conducted using TAMP to automatically generate robotic manipulation motions.1, 2
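The three layers described above can be illustrated with a minimal sketch. This is not the planner used in the cited studies; every name here (`plan_task`, `plan_motion`, `plan_grasp`, the `GRASPS` and `LOCATIONS` tables, and the straight-line path) is a hypothetical stand-in chosen only to show how the layers hand results to one another.

```python
from dataclasses import dataclass

# Hypothetical data standing in for real perception and grasp databases.
GRASPS = {"bolt": "pinch", "plate": "side"}
LOCATIONS = {"bolt": (0.2, 0.1), "plate": (0.5, 0.3), "fixture": (0.4, 0.0)}


@dataclass
class Step:
    action: str  # decided by the task layer
    obj: str     # object being manipulated
    path: list   # waypoints decided by the motion layer
    grasp: str   # hand posture decided by the grasp layer


def plan_task(obj, destination):
    """Top layer: expand the instruction into an ordered list of actions."""
    return [("pick", obj), ("place", destination)]


def plan_motion(start, target, n=3):
    """Middle layer: straight-line waypoints (an assumption; no obstacles)."""
    (x0, y0), (x1, y1) = start, target
    return [(x0 + (x1 - x0) * i / n, y0 + (y1 - y0) * i / n)
            for i in range(1, n + 1)]


def plan_grasp(obj):
    """Lowest layer: look up a hand posture for the object."""
    return GRASPS[obj]


def plan(obj, destination, hand_start=(0.0, 0.0)):
    """Run the three layers in order and collect the resulting steps."""
    steps, pos = [], hand_start
    for action, where in plan_task(obj, destination):
        target = LOCATIONS[where]
        steps.append(Step(action, obj, plan_motion(pos, target), plan_grasp(obj)))
        pos = target
    return steps
```

Calling `plan("bolt", "fixture")` yields a pick step followed by a place step, each carrying its own waypoints and grasp posture. In a real system each layer would be a search or optimization problem, and a failure in a lower layer would feed back to the layer above.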

However, the tasks that can be realized by applying only TAMP are limited. For example, a human can cook a meal by referring to a recipe. A robot should likewise be able to complete a required task when given human-understandable work instructions, such as recipes and verbal instructions. In this case, a robot must be equipped with tacit knowledge, like that possessed by humans, and must adaptively employ information not written in the work instructions to carry out the task.

Secondly, we rarely have accurate geometrical information about the surrounding environment or accurate physical parameters of the grasped object. To compensate for this missing or inaccurate information, we need sensor feedback, and the robot’s motion must be planned with this limitation taken into account. It is also often necessary to learn movements from human demonstrations. In addition, although it would be desirable for the robot to perform all tasks with a single universal hand attached to the arm tip, this is not always possible in practice. In many cases, we have to carefully design a specialized robotic hand for each task, even though such a hand is unsuited to other tasks.

Fig. 2 shows an example of robotic motion planning where an elastic ring-shaped object is installed in a cylinder using a dual-arm industrial manipulator.3 We planned a sequence of motions for the two arms while minimizing the ring’s elastic energy. First, the left hand moves toward its target position while the right hand remains stationary. Then, the right hand moves toward its target position while the left hand remains stationary. After iterating this operation for a few steps, the robot can install the ring-shaped object in the cylinder.
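The alternating scheme can be sketched as follows. This is a toy illustration, not the method of the cited work: the ring is replaced by a single spring between the two hands, and `elastic_energy`, `step_toward`, `plan_alternating`, the step sizes, and the spring parameters are all assumptions made for the example. Positions and goals are expected to be tuples.

```python
import math


def elastic_energy(a, b, rest_length=1.0, stiffness=1.0):
    """Toy stand-in for the ring: one spring stretched between the hands."""
    stretch = math.dist(a, b) - rest_length
    return 0.5 * stiffness * stretch ** 2


def step_toward(pos, goal, length):
    """Move pos toward goal by at most `length`, without overshooting."""
    d = math.dist(pos, goal)
    if d <= length:
        return goal
    t = length / d
    return tuple(p + (g - p) * t for p, g in zip(pos, goal))


def plan_alternating(left, right, left_goal, right_goal, max_step=0.2):
    """Move one hand at a time; each move picks the lowest-energy candidate."""
    pos = {"left": left, "right": right}
    goal = {"left": left_goal, "right": right_goal}
    moves, hand, other = [], "left", "right"
    while pos != goal:
        if pos[hand] != goal[hand]:
            # Candidate moves: a half step or a full step toward the goal.
            candidates = [step_toward(pos[hand], goal[hand], max_step * f)
                          for f in (0.5, 1.0)]
            pos[hand] = min(candidates,
                            key=lambda p: elastic_energy(p, pos[other]))
            moves.append((hand, pos[hand],
                          elastic_energy(pos["left"], pos["right"])))
        hand, other = other, hand  # the other hand moves next
    return moves
```

For example, `plan_alternating((0.0, 0.0), (1.0, 0.0), (0.0, 0.5), (1.0, 0.5))` returns a list of `(hand, new_position, energy)` entries in which the left and right hands take turns until both reach their goals. Because every move advances the active hand by at least half a step, the loop terminates.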

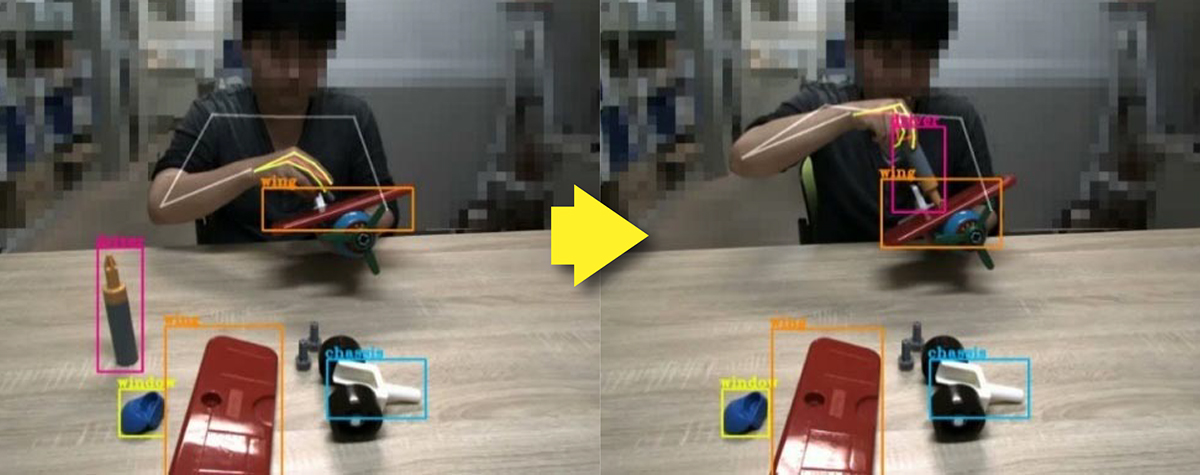

In contrast, Fig. 3 shows an example of understanding a human-performed assembly task for the purpose of transferring it to a robot.4 This figure shows a situation where a human assembles a toy airplane. By capturing the human’s motion during assembly, we try to identify what kind of task is being performed. However, because humans can perform a variety of tasks, we must determine the task being performed from a large number of candidates. To reduce this difficulty, we first identify the grasped object and then identify the task associated with it.
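The two-stage idea, object first and task second, can be sketched as below. The object names, task names, and the `TASKS_BY_OBJECT` table are hypothetical, and the score dictionaries stand in for the outputs of whatever recognizers are actually used; the sketch only shows how knowing the grasped object shrinks the set of task candidates.

```python
# Hypothetical mapping from a grasped object to the tasks it can take part in.
TASKS_BY_OBJECT = {
    "screwdriver": ["tighten_screw", "loosen_screw"],
    "wing": ["insert_wing"],
    "propeller": ["attach_propeller"],
}


def recognize_task(object_scores, task_scores):
    """Pick the most likely grasped object, then the best task among its candidates."""
    obj = max(object_scores, key=object_scores.get)
    candidates = TASKS_BY_OBJECT[obj]
    # Tasks unrelated to the grasped object are never considered,
    # even if their raw score happens to be high.
    task = max(candidates, key=lambda t: task_scores.get(t, 0.0))
    return obj, task
```

With object scores that favor `"screwdriver"`, only the two screw-related tasks are compared, so a high raw score for an unrelated task such as `"insert_wing"` cannot win.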

Real-world applications

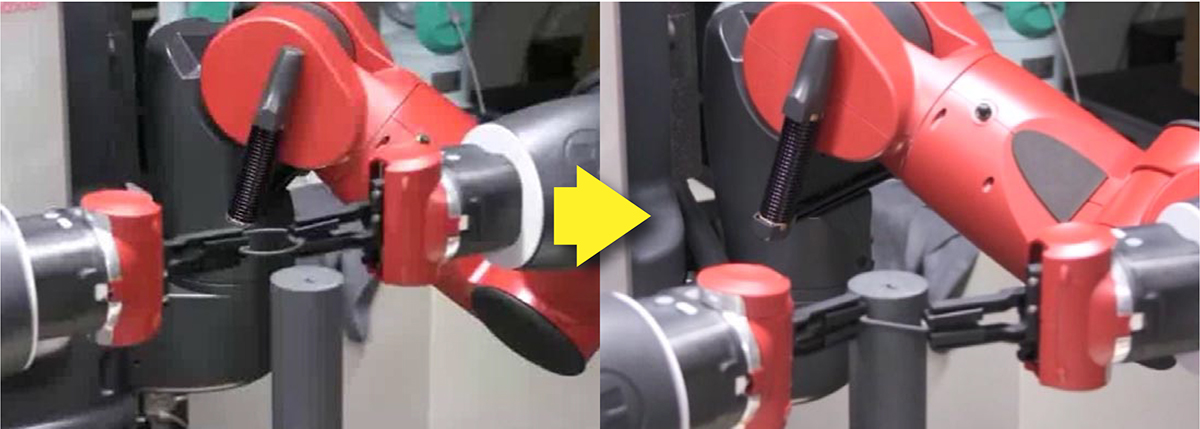

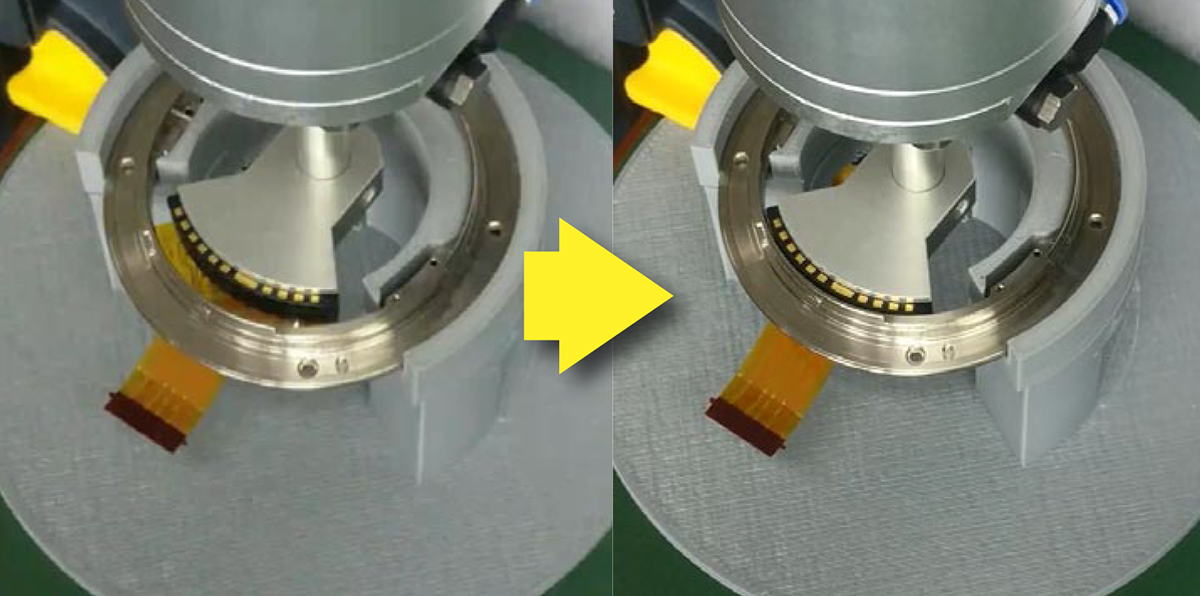

This section discusses the real-world applications of robotic manipulation research. One of the foremost areas in which human tasks are expected to be performed by robots is factory manufacturing. As depicted in “Modern Times” and other films, factory work remains harsh on humans even today, despite modern science and technology. This is especially noticeable in the assembly and inspection processes for high-mix low-volume manufacturing. Such processes require frequent task changes, which makes robotization difficult. Attempts have been made to introduce AI to industrial robots to solve these problems. Fig. 4 shows one such example, camera-lens assembly.5 By using reinforcement learning, we can effectively obtain the trajectory of the parts as well as the force control parameters.

Robotic manipulation research is also expected to impact our daily lives. Many countries, including Japan, are experiencing a rapid decline in birth rate and an aging population. The shortage of caregivers for the elderly and physically challenged has become a social problem. At present, daily life assistance for tasks such as feeding, dressing, and bathing is very hard work, and it is hoped that robots will be able to take over these functions from humans. Moreover, elderly and physically challenged people in nursing homes are provided with individualized meals, and it is expected that the production and serving of these meals will also be automated. The robotic manipulation technology we are studying is critically important for automating these tasks.

Conclusion

In this article, we discussed robotic manipulation research from both academic and practical points of view. Robotic manipulation is a key technology for introducing robots into certain fields. With recent advancements in AI technology, robotic manipulation has also made significant advancements. We are working hard to introduce robots in many fields through the advancement of robotic manipulation technology.

References

1 Kensuke Harada, Tokuo Tsuji, and Jean-Paul Laumond, “A Manipulation Motion Planner for Dual-Arm Industrial Manipulators,” Proceedings of IEEE International Conference on Robotics and Automation, pp. 928-934, 2014.

2 Weiwei Wan and Kensuke Harada, “Integrated Assembly and Motion Planning using Regrasp Graphs,” Robotics and Biomimetics, Vol. 3, No. 18, DOI 10.1186/s40638-016-0050-2, 2016.

3 Ixchel G. Ramirez-Alpizar, Kensuke Harada, and Eiichi Yoshida, “Motion Planning for Dual-arm Assembly of Ring-shaped Elastic Objects,” Proceedings of IEEE-RAS International Conference on Humanoid Robots, pp. 594-600, 2014.

4 Kosuke Fukuda, Natsuki Yamanobe, Ixchel Georgina Ramirez-Alpizar, Kensuke Harada, “Assembly Motion Recognition Framework Using Only Images,” Proceedings of IEEE/SICE International Symposium on System Integrations, pp. 1242-1247, 2020.

5 Cristian Beltran, Damien Petit, Ixchel Ramirez, Takamitsu Matsubara, Kensuke Harada, “Hybrid position-force control with reinforcement learning,” The 20th Annual Conference on SI Division of SICE, 2019.

*Please note: This is a commercial profile