On 6 January 2021, the world watched as an angry crowd broke into the Capitol building. Here, researchers explore the evolution of US extremist groups and how this moment of violence happened.

The research team investigated how these online communities formed, focusing on the ‘Boogaloos’ and comparing them with what the team already understood about extremism from studying online support for ISIS, a militant terrorist organisation based in the Middle East.

Firstly, who are the Boogaloos?

The Boogaloos are a loosely organised, pro-gun-rights, anti-police movement preparing for a future civil war in the US, which they believe will happen soon. They were one of the groups that orchestrated the Capitol riot.

They formed in 2019, and in 2020 became increasingly active across the country. They show up to anti-police protests in the wake of police brutality, but as a group they are also connected to white supremacy. Early online support for the Boogaloos looked similar to online support for ISIS, despite the immense differences in ideology, geography, and culture.

ISIS, by contrast, focuses on a specific ideology, a radicalised form of Islam, and is responsible for terrorist attacks across the globe.

‘Hidden common patterns’ could disrupt future extremism

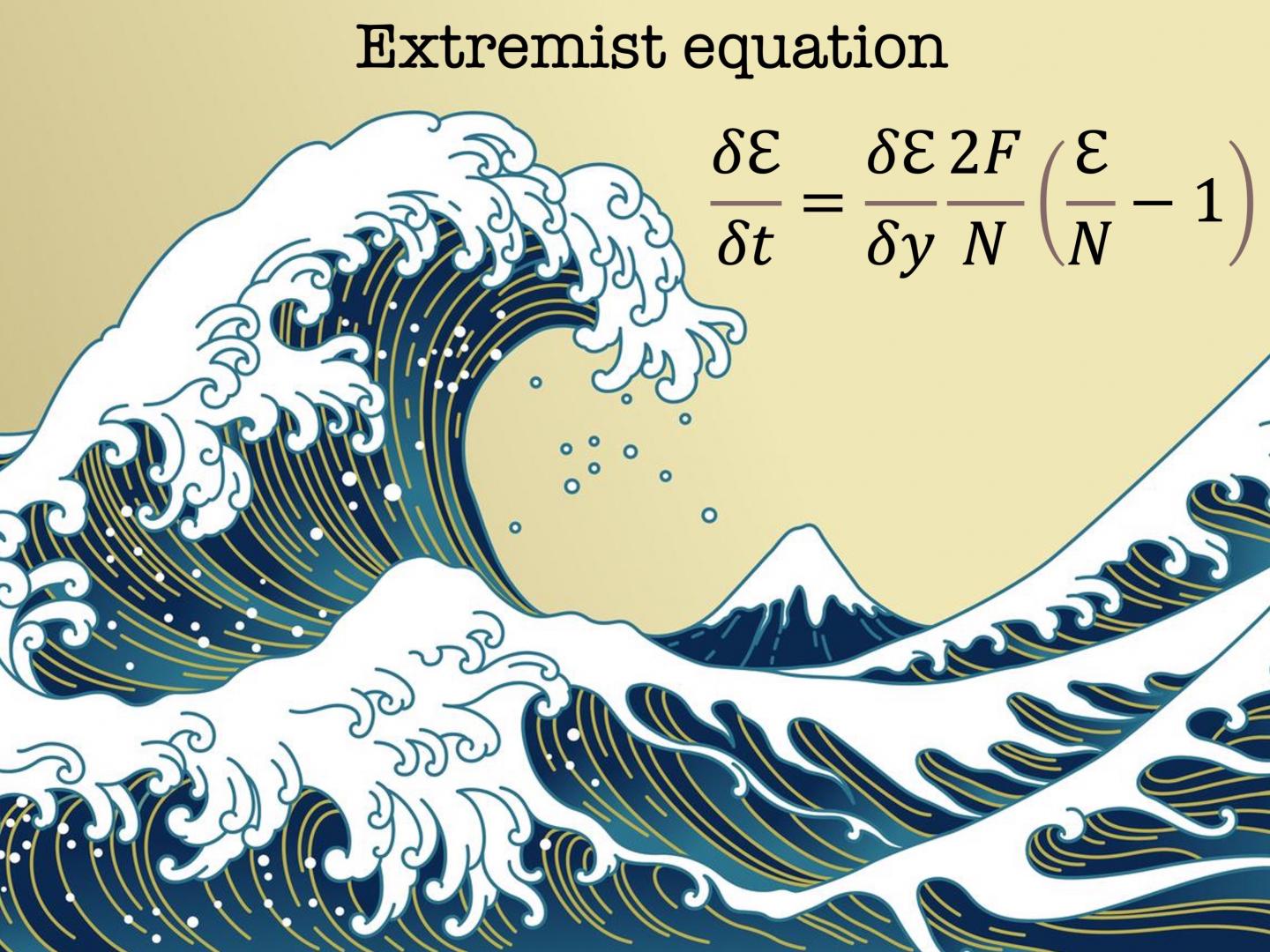

Professor Neil Johnson, a researcher at the George Washington University Institute for Data, Democracy & Politics, said: “This study helps provide a better understanding of the emergence of extremist movements in the U.S. and worldwide. By identifying hidden common patterns in what seem to be completely unrelated movements, topped with a rigorous mathematical description of how they develop, our findings could help social media platforms disrupt the growth of such extremist groups.”

Online communities cultivate real-world violence

The team observed both communities on social media platforms. They found that specific policies may be necessary to limit the growth of extremist movements such as the Boogaloos and ISIS. They further emphasise that online extremism can, and often will, lead to real-world violence.

A separate study, which compared anti-Asian hate crime with online discussion of who was to blame for COVID, showed a similar pattern of escalation.

But social media platforms are struggling to stop the growth of online extremism. New, closed platforms that share their ideals welcome communities who leave mainstream platforms, and individuals change the way they discuss their ideas. Combining content moderation with actively promoting users who are engaged in counter-messaging may not be enough to prevent real-world violence in future.

Yonatan Lupu, an associate professor of political science and co-author on the paper, said: “One key aspect we identified is how these extremist groups assemble and combine into communities, a quality we call their ‘collective chemistry’.

“Despite the sociological and ideological differences in these groups, they share a similar collective chemistry in terms of how communities grow. This knowledge is key to identifying how to slow them down or even prevent them from forming in the first place.”
